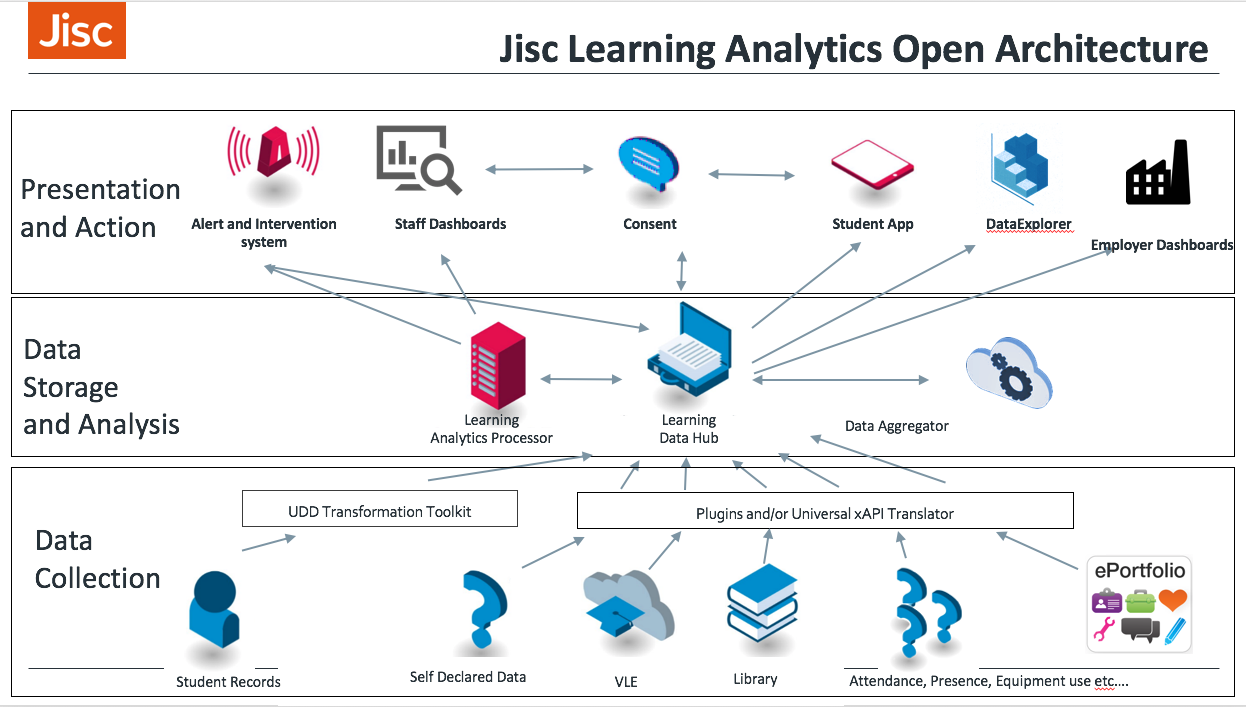

What’s an ADDR? Luckily it is not Vipera berus, Britain’s only venomous snake, but the Jisc Apprentice Digital Delivery Review. The ADDR aims to help universities, colleges and skills providers ensure that they have the necessary digital processes, practices and infrastructure in place to take advantage of the new landscape (in England at least) surrounding apprenticeships.

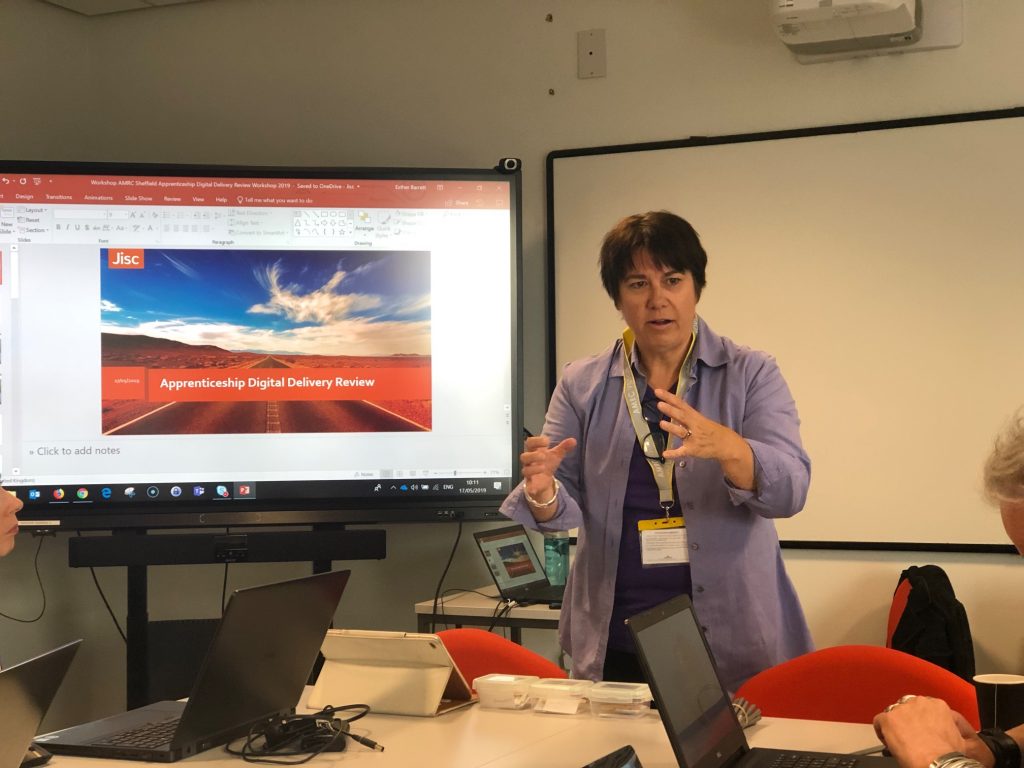

The ADDR is a bit different from other Jisc workshops in that it is a purely internal workshop for the institution or organisation concerned. Recently the Jisc team involved in rolling out the ADDR offering ran a pilot workshop to test the format with the folk from the Advanced Manufacturing Research Centre (AMRC) – which is part of the University of Sheffield.

The AMRC offers a whole range of apprenticeships, from L2, L3, BTEC and HNC through to 3-year degree apprenticeships, and is looking to add Masters-level apprenticeships. The centre is one of the Catapult centres established by Innovate UK to transform the UK’s capability for innovation in specific areas – in the case of Sheffield, advanced manufacturing.

We met up with a wide range of people from the university and the AMRC itself. Being on a site away from the main campus and being, in addition, a somewhat specialised outfit, it was good that there were people from the MIS function and the Library present, as the relationship of the AMRC to those centrally provided services was going to be one of the interesting questions that would need to be examined.

Esther Barrett explains the ADDR process

The bulk of the work of the ADDR is done by the people from the organisation. The Jisc team is there to facilitate, to help keep focus and to suggest formats that can be used to surface the areas that need attention. One of these is the use of some small cards to prioritise areas of activity. The team provided some cards with topics already suggested and a stack of blank cards for writing in additional topics.

The AMRC team get prioritising

There followed a session in which the two teams (they had split themselves into two teams to focus on different parts of the apprenticeship delivery process) presented their choices to each other. This led to further refinement and grouping of issues into a number of broad headings with sub-issues underneath them.

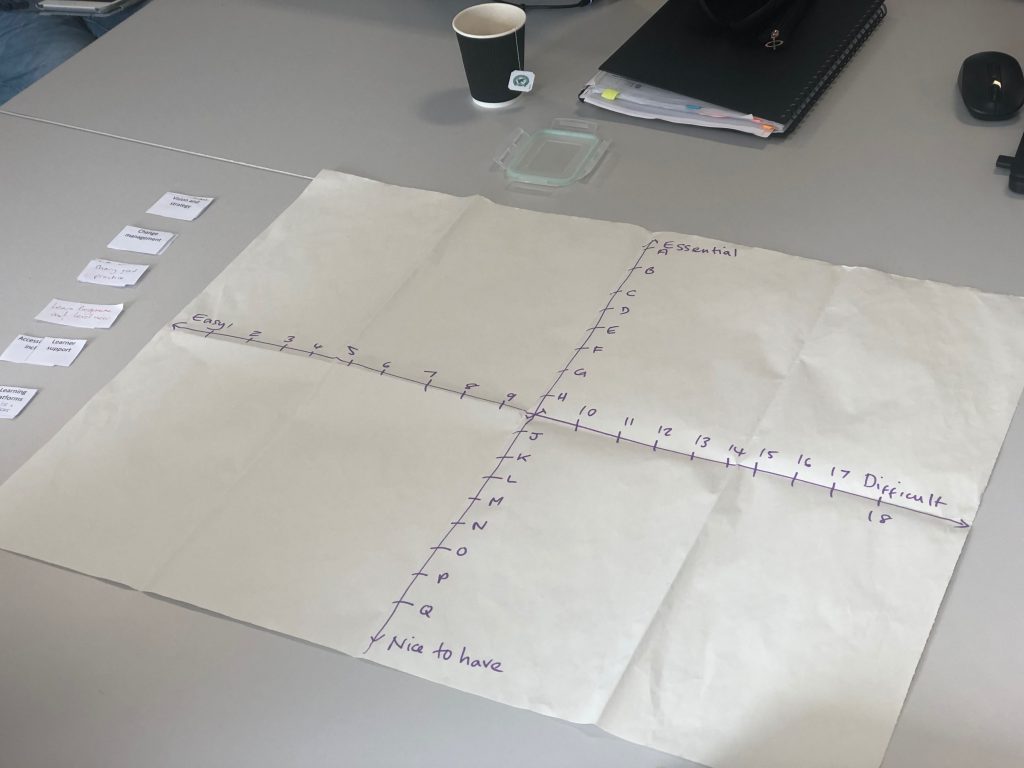

The next stage was to place these issues on a grid – with one axis running from easy to difficult and the other from nice-to-have to essential. The teams then discussed the placement – often surfacing blockers or dependencies as they did.

The magic grid

Once the issues had been placed and debated, the teams arrived at the next step, which was to start creating the action plans needed to realise the desired goals.

This workshop wrapped up good and early with some strong action plans being developed and the promise of further conversations to come, which was proof of the positive energy and intention that the participants brought to the workshop.

We will follow up with them in a few months to see where things have got to.

If you would like to find out more about the ADDR process, then please contact:

- Esther Barrett – Subject specialist (teaching, learning and assessment) – esther.barrett@jisc.ac.uk

- Rob Bristow – Senior co-design manager – rob.bristow@jisc.ac.uk