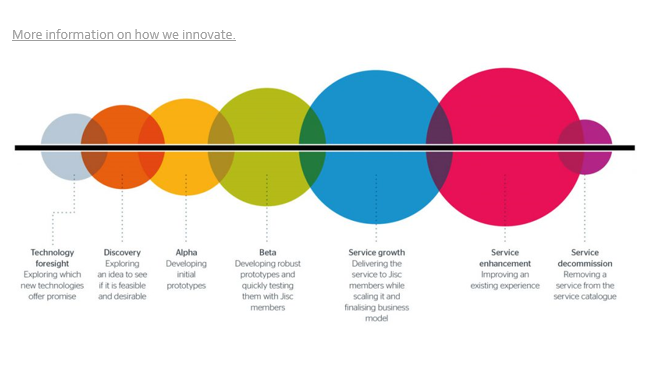

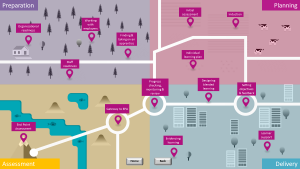

Earlier this year we launched the beta version of our provider toolkit ‘Apprenticeship journey in a digital age’. The purpose of the toolkit is to show how effective use of digital technologies can help in the delivery of the new apprenticeship standards. It is aimed at colleges and training providers and has a ‘dip in’ format that is designed to accommodate the needs of a range of staff: senior managers may need to review the overview sections while other staff may drill down to specific content relevant to their practice.

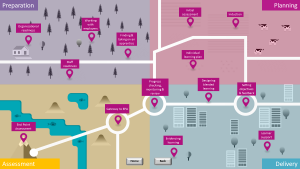

Mindful of the pressure on the working lives of staff in the sector, we have deliberately adopted this more visual format to make our guidance more easily accessible. It breaks down the apprenticeship journey into four stages: preparation, planning, delivery and assessment. For each stage we offer a succinct overview of how digital technologies can support training providers and enhance the apprenticeship experience, with access to case studies, further reports and guidance.

As part of the development process we sought feedback from providers and stakeholders over the summer. Through a series of interviews and workshops we engaged with over 100 people. Overall, the feedback has been very positive.

Review feedback – what users liked

Users really liked the succinct and visual format and found it helpful to get a high-level overview of each topic. The layout and ease of access of the resource were well received and the topic headings reflected sector needs. Users also found it helpful that digital opportunities are highlighted in a positive way, that common issues are surfaced, and that relevant additional resources on specific topics are easy to reach.

Provider case studies were welcomed with the proviso that users really value the ‘warts and all’ approach to case studies – they want to see how challenges have been overcome and to know the pitfalls to avoid.

The graphical elements of the guide provoked a very ‘Marmite’ response. Many found the design innovative and felt it helped to convey the overall journey, but some did not understand the concept and didn’t find the iconography helpful. We are working on these aspects!

And what they didn’t like or would like more of

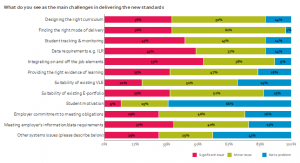

Understandably, there are concerns about currency as policy and practice in apprenticeship delivery evolve. To some extent, as we develop the toolkit further, we are reliant on the sector being sufficiently confident and willing to share the practice it finds effective.

Users requested more examples from curriculum areas that are regarded as ‘hard to reach’ but acknowledged that this is difficult without consensus as to which curriculum areas fall into this category.

Some felt that the resources linked to from the toolkit are still very text-based, sometimes rather ‘wordy’ and don’t reflect the variety of digital formats available. This is something to address as we gather future examples of best practice and build a broader range of digital assets.

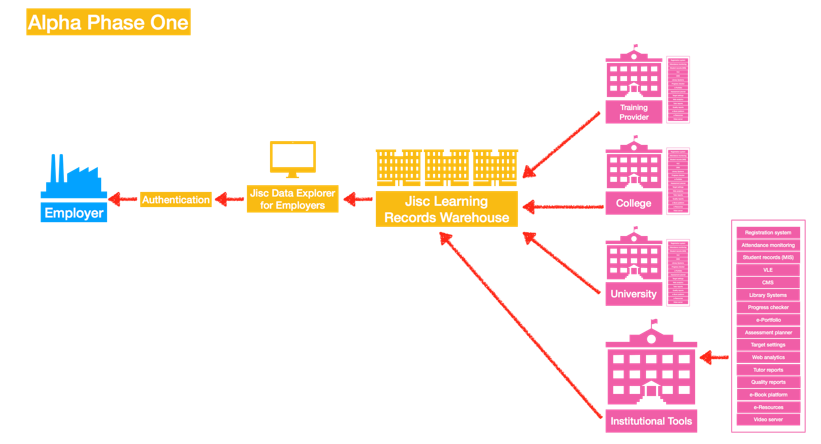

Some smaller providers are seeking further guidance on developing an appropriate digital infrastructure. The breadth of practice in the sector and the different technological starting points mean it is difficult to address the wide spectrum of issues providers face. The screen offering a ‘Technology view’ is perhaps the most challenging aspect of the resource and we are taking further guidance on this aspect.

Next steps and how you can get involved

We are working with a specialist web design company to develop the resource, to bring the concept to life and to address some of the issues raised. We hope to launch the next version early in 2018. In the meantime, please do get in touch with Lisa Gray if you have an example to share of effective use of digital interventions relating to apprenticeship delivery.

Higher or degree apprenticeships

We are exploring how digital technologies can best support the delivery of the new apprenticeships at higher and degree level and held a ‘think tank’ event to explore this with providers on 14 November 2017. See our blog post on the higher and degree apprenticeships project.

In the meantime, if you are involved with, or considering, the delivery of higher or degree apprenticeships we would be grateful if you would complete our short survey to share your experiences with us and help us to better understand your issues and priorities.